Authority Bias — Meaning, Examples & How to Overcome It

What Is Authority Bias? Simple Definition

Authority bias is the tendency to attribute greater accuracy, credibility, or correctness to the opinions and instructions of authority figures — and to be more likely to comply with them — regardless of whether the content of those opinions or instructions actually warrants that deference. We trust the doctor, the expert, the person in uniform, the senior colleague more than we trust our own judgment, even when our own judgment is sound and theirs is not.

The bias operates on two levels: epistemic (believing that authority figures are more likely to be right) and behavioural (being more likely to do what they say). Both levels can produce poor outcomes when the authority is mistaken, compromised, or simply operating outside their actual area of expertise.

This page is part of the cognitive biases guide on our free cognitive training and brain testing platform, alongside interactive tools covering memory, attention, reaction time, and decision-making.

Authority Bias Meaning & Psychology

The psychological basis for authority bias is a combination of social learning, evolved deference to status hierarchies, and the practical efficiency of using expertise as a heuristic. Deferring to people who know more than you is often genuinely rational — it is how children learn, how novices develop skill, and how complex societies distribute knowledge. The problem arises when this rational heuristic is applied indiscriminately, extending to situations where the authority figure is wrong, where they lack expertise in the relevant domain, or where compliance causes harm.

The most influential demonstration of authority bias in psychology came from Stanley Milgram's obedience experiments at Yale University. In the original study, an experimenter in a lab coat instructed participants to administer what they believed were electric shocks of increasing intensity to another person whenever that person gave an incorrect answer. The shocks were not real, but participants did not know this. Milgram (1963) found that 65% of participants (26 of 40) continued all the way to the maximum 450-volt level, past the switches labelled "Danger: Severe Shock" and despite the apparent distress of the person being shocked, simply because an authority figure told them to continue. As Milgram later put it, ordinary people, simply doing their jobs, could become agents in a destructive process when instructed by a figure of perceived authority.

Cues that trigger authority bias

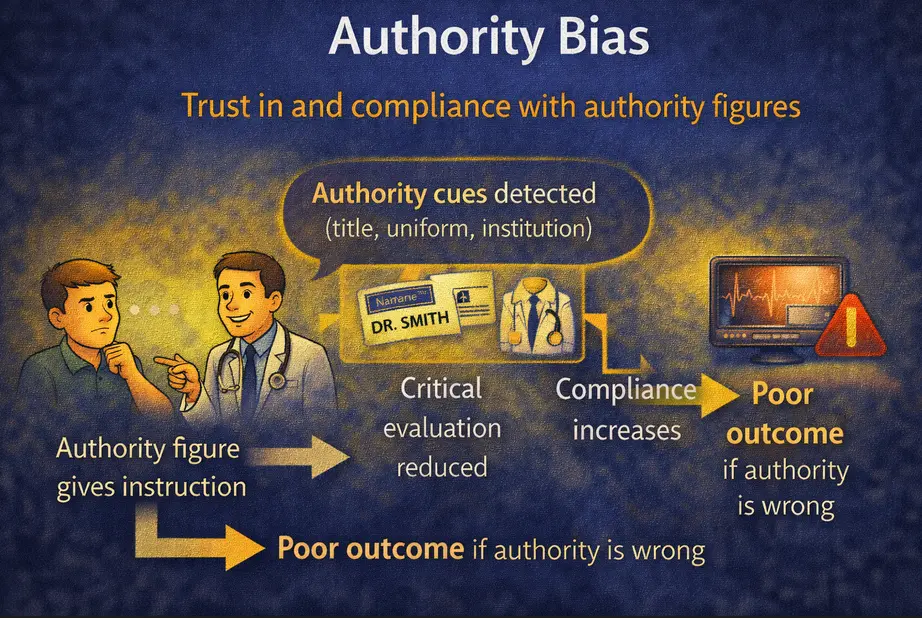

Authority is signalled not only by genuine expertise but by a range of surface cues: titles (Doctor, Professor, Director), uniforms, institutional affiliation, confident tone, physical height, and social status. People respond to these cues largely automatically, adjusting their deference based on perceived authority rather than verified competence. This means authority bias can be triggered by symbols of authority in the complete absence of actual expertise — a finding with significant practical implications for how people make decisions in medical, legal, financial, and organisational contexts.

Authority bias: detected cues such as title, uniform, and institution reduce critical evaluation and increase compliance — producing poor outcomes when the authority figure is wrong.

Authority Bias in Real Life — Examples

In medicine, authority bias is one of the factors behind the persistence of medical errors. Junior doctors and nurses frequently notice problems with a senior clinician's plan but do not raise them, deferring to the authority of the more senior figure. Studies of aviation accidents have shown a similar pattern: co-pilots who notice the captain making an error often fail to intervene decisively, sometimes with fatal consequences. The aviation industry's response, Crew Resource Management (CRM) training designed specifically to reduce hierarchy-based deference, is one of the clearest examples of a systematic institutional intervention against authority bias.

In the workplace, authority bias leads employees to implement decisions they privately believe are wrong, to leave poor reasoning unchallenged in meetings when it comes from senior figures, and to give managers' opinions more weight than the evidence warrants. Organisations in which authority bias is strong tend to receive less useful information from front-line employees and to make more errors that a less inhibiting hierarchy would have caught.

In consumer contexts, authority bias drives the effectiveness of endorsements. A product endorsed by someone with a title or institutional affiliation, or even by someone simply wearing a white coat in an advertisement, tends to be judged more credible than the same product without that endorsement, even when consumers are aware that the endorsement is paid. The title "Dr." before a name in a health product advertisement can increase purchase intent whether or not the claimed expertise is relevant to the product.

Authority Bias in Medicine and Patient Safety

The medical context is where authority bias has some of its most consequential effects. Patients frequently accept medical recommendations without seeking to understand the reasoning behind them, the evidence supporting them, or the alternatives available. This is not always harmful — medical expertise is real and the division of labour is sensible — but it becomes harmful when patients do not ask about side effects, do not seek second opinions for serious diagnoses, do not report symptoms they find embarrassing or minor, and do not question recommendations that seem inconsistent with information they have from other sources.

Among healthcare professionals, the hierarchy of medicine creates conditions in which authority bias can suppress safety-critical information. A nurse who suspects a patient is being given the wrong medication may hesitate to raise this with a consultant, deferring to the consultant's presumed expertise and status. This hesitation is a direct product of authority bias — the assumption that the more senior person is more likely to be right, even when the evidence suggests otherwise.

Authority Bias and Expertise: A Critical Distinction

Authority bias involves a confusion between authority and expertise. Genuine expertise — the product of sustained engagement with a domain, verified by evidence and outcomes — is worth deferring to in proportion to the evidence of its quality. Authority, by contrast, is a social status that can be conferred by title, appearance, institutional position, and confident presentation without any corresponding expertise. The person with the most impressive credentials is not always the most competent in the specific situation at hand, and the person without credentials is not necessarily wrong.

The critical error that authority bias promotes is treating authority as a proxy for correctness rather than as one signal to be weighed alongside evidence and reasoning. This distinction connects closely to the Dunning-Kruger effect: genuinely expert people often express more uncertainty, acknowledge the limits of their knowledge more readily, and are less likely to project the confident authority that triggers the bias. Surface confidence and titles are therefore imperfect signals of genuine competence.
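For readers who like to see the idea in numbers, here is a minimal sketch of what "weighing authority alongside evidence" could look like. It uses the odds form of Bayes' rule and assumes the signals are independent. The function name and every number in it are hypothetical, chosen only for illustration and not drawn from any study.

```python
def posterior_probability(prior, likelihood_ratios):
    """Update a prior probability with a list of likelihood ratios.

    Uses the odds form of Bayes' rule and assumes the signals are
    independent of one another (a simplifying assumption).
    """
    odds = prior / (1.0 - prior)
    for ratio in likelihood_ratios:
        odds *= ratio
    return odds / (1.0 + odds)


# Hypothetical numbers, for illustration only.
prior = 0.5                # no initial view on whether the claim is true
authority_endorses = 3.0   # endorsement is 3x more likely if the claim is true
contrary_evidence = 0.25   # direct evidence is 4x more likely if the claim is false

authority_alone = posterior_probability(prior, [authority_endorses])
authority_and_evidence = posterior_probability(
    prior, [authority_endorses, contrary_evidence]
)

print(f"Authority alone: {authority_alone:.2f}")
print(f"Authority plus contrary evidence: {authority_and_evidence:.2f}")
```

In this toy case the endorsement raises confidence in the claim, but it does not cancel out the contrary evidence. Treating authority as a proxy for correctness amounts to stopping after the first update.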

How to Avoid and Overcome Authority Bias

Separate the claim from the source

When evaluating a claim or instruction from an authority figure, try to assess the substance of the claim independently of who is making it. Ask: what is the evidence for this? What would I think if someone without this title or status said the same thing? This is not the same as dismissing expertise — it is the practice of evaluating expertise by its actual outputs rather than its social signals.

Ask questions rather than simply complying

In practice, the most effective counter to authority bias is the habit of asking clarifying questions rather than accepting instructions without understanding. "Can you help me understand why this is the recommended approach?" is a question that respects expertise while also activating critical engagement. It is harder to blindly comply with a recommendation you have engaged with than one you have simply received.

Notice when authority is outside its domain

Authority is domain-specific. A brilliant surgeon may have no special competence in financial matters; a renowned economist may know nothing about nutrition. Authority bias frequently involves extending deference beyond the domain in which the authority figure actually has expertise. Making the domain of expertise explicit — and checking whether the current situation falls within it — reduces inappropriate generalisation of authority deference. This connects to related patterns in overconfidence and confirmation bias, where we attend selectively to information that confirms the authority's view while discounting contradictory evidence.

Cultivate institutional structures that counteract authority bias

Individual awareness is not enough in high-stakes environments. The aviation industry's adoption of CRM protocols, the medical profession's development of structured safety checklists, and the use of anonymous reporting systems for safety concerns are all institutional responses to authority bias. These structures work by creating explicit permission — and expectation — that lower-status people will raise concerns about the decisions of higher-status people. They reduce the social cost of challenging authority and thereby reduce the suppression of useful information that authority bias otherwise produces.

The Deeper Point

Authority bias is not simply a failure of critical thinking. It is the over-extension of a heuristic that is often genuinely useful — deference to expertise saves time and is frequently correct. The problem is that the heuristic operates on social signals rather than on verified competence, and so produces deference that is calibrated to the appearance of authority rather than to its actual quality. The result is systematic under-weighting of one's own judgment and evidence-based reasoning in favour of whoever has the most impressive title or the most confident presentation.

Understanding authority bias does not mean distrusting all experts or refusing all deference. It means developing a more accurate model of when and how much to defer — one that weighs authority as one signal among several rather than as a trump card that ends the need for further evaluation. That more calibrated relationship with authority is harder to maintain than either blanket deference or blanket scepticism, but it produces better decisions.

Related biases worth exploring alongside this one: the halo effect, which extends a positive impression from one domain to unrelated domains, much as authority is overgeneralised; in-group bias, which increases deference to in-group authorities and scepticism toward out-group ones; and the overconfidence effect, which is the mirror image: the failure to accurately recognise the limits of one's own expertise.

The Cognitive Bias Spotter Test below puts that understanding to work — see if you can identify authority bias and the other nine biases when they appear in realistic scenarios.