Bias Blind Spot — Meaning, Examples & How to Overcome It

What Is the Bias Blind Spot? Simple Definition

The bias blind spot is the tendency to recognise cognitive biases in other people's thinking more readily than in your own. You can identify when others are being influenced by confirmation bias, motivated reasoning, or self-serving attribution — but when the same biases operate in your own thinking, you tend not to notice them, or to conclude that your thinking is an exception.

In plain terms: everyone is biased, and everyone knows that everyone is biased — but almost everyone believes they personally are less biased than average. The bias blind spot is not just a failure to notice your own biases; it is the active, confident belief that you are seeing things more objectively than you actually are.

Bias Blind Spot Meaning & Psychology

The bias blind spot was identified and named by Pronin, Lin & Ross (2002) in a series of three studies examining how people assess their own susceptibility to cognitive bias compared with others. In the core finding, participants rated themselves as less subject to a range of well-known biases, including the halo effect, biased assimilation of new information, and self-serving attributions for success and failure, than the average American, than their classmates, and than fellow airport travellers. Crucially, when participants were shown a description of a bias they had themselves exhibited and asked whether it could have influenced their judgment, they consistently denied it while acknowledging that it likely influenced others.

A follow-up study found that this denial was not mere social performance — participants genuinely believed their self-assessments were accurate and unaffected by bias, even when the evidence that they had been biased was directly in front of them. The bias blind spot is not a lie; it is a sincere misperception.

The introspection illusion

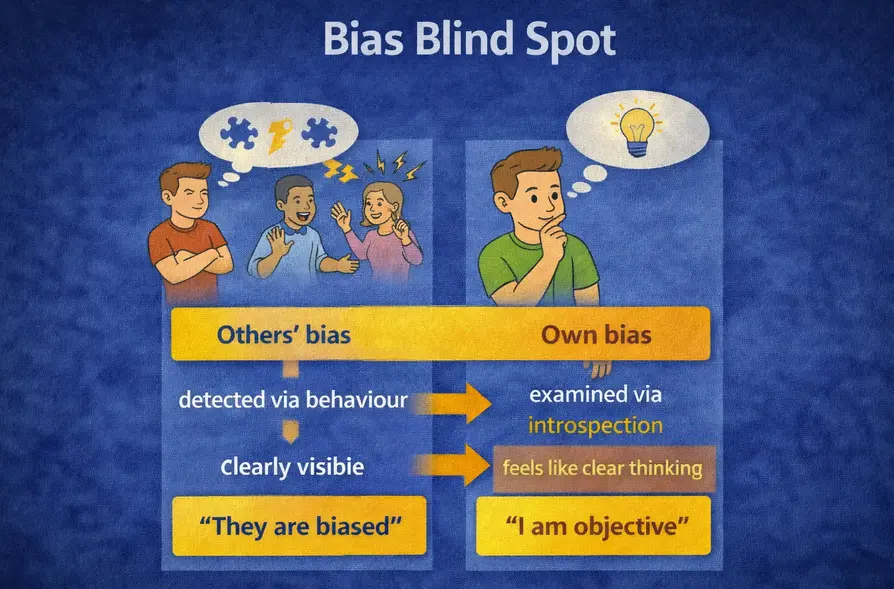

The underlying mechanism is what researchers call the introspection illusion. When judging whether someone else is biased, people rely on observable behaviour and outcomes — they look for evidence in what the person said or did. When judging whether they themselves are biased, people look inward — they examine their own thoughts, intentions, and motivations. The problem is that bias does not feel like bias from the inside. Motivated reasoning feels like careful thinking. Selective attention feels like noticing what matters. Biased memory feels like accurate recall. Because the experience of being biased is indistinguishable from the experience of thinking clearly, introspection provides no reliable signal of bias — but people trust it as if it did.

This creates a structural asymmetry: the tools people use to detect bias in others (behaviour, outcomes) are more effective than the tools they use to detect it in themselves (introspection). The result is a systematic tendency to see more bias in others and less in oneself.

The bias blind spot: others' bias is detected through behaviour and is clearly visible, while own bias is examined through introspection — where it feels like clear thinking, producing the conclusion "I am objective."

Bias Blind Spot in Real Life — Examples

The bias blind spot is one of the most pervasive and consequential cognitive biases precisely because it undermines the ability to correct other biases. If you cannot recognise that a bias is operating in your own thinking, you cannot take steps to account for it. Every other cognitive bias is harder to overcome because the bias blind spot makes people less likely to believe it applies to them.

In everyday disagreements, the bias blind spot shows up as the common tendency for each party to perceive themselves as reasoning objectively and the other party as being driven by emotion, self-interest, or ideology. Each person in a dispute typically believes their own position is based on the facts and the other's is based on bias. They cannot both be right, but the bias blind spot makes this asymmetric perception feel accurate from both sides.

In political contexts, the bias blind spot is one reason why political polarisation is self-reinforcing. Each side of a political divide perceives the other as biased and itself as clear-sighted, which makes it harder to engage seriously with opposing evidence and easier to dismiss disagreement as the product of the other side's cognitive failings rather than genuine differences in values or interpretation.

Bias Blind Spot in the Workplace

Professional environments are particularly susceptible to the bias blind spot because they tend to reinforce the belief in one's own objectivity. Experts, analysts, and decision-makers are trained to be rigorous, and this training is often interpreted — wrongly — as protection against bias. Research consistently shows that expertise does not eliminate cognitive bias and sometimes amplifies it, because confident experts are more likely to trust their intuitions and less likely to subject them to scrutiny. The bias blind spot means that the most experienced decision-makers are often the least likely to recognise when their confidence is the product of motivated reasoning rather than genuine insight.

In hiring and performance evaluation, the bias blind spot leads evaluators to believe their assessments are objective even when they are influenced by factors unrelated to the candidate's actual performance — appearance, name, accent, or shared background. When this is pointed out, the evaluator typically acknowledges that others might be influenced by such factors while maintaining that their own assessment was not. This is the bias blind spot in direct operation.

Bias Blind Spot in Medicine and Law

Medical decision-making is significantly affected by the bias blind spot. Physicians who are shown evidence of systematic diagnostic biases in their specialty, such as anchoring on an early hypothesis or applying different diagnostic thresholds to patients from different demographic groups, typically accept the evidence at the group level while believing their own clinical judgment is an exception. Studies on conflicts of interest in medicine similarly find that physicians who receive gifts or payments from pharmaceutical companies consistently believe such payments do not influence their prescribing behaviour, despite strong evidence that they do.

In legal settings, the bias blind spot affects judges, jurors, and attorneys who believe their assessments of evidence are more objective than they actually are. A judge who is aware of research on racial disparities in sentencing may still believe their own sentencing decisions are unaffected by race, because introspection reveals no biased intent — which, as the research on the introspection illusion shows, is exactly what you would expect whether or not bias is present.

How to Avoid and Overcome the Bias Blind Spot

Accept bias as the default, not the exception

The most fundamental shift required to address the bias blind spot is to stop treating bias as something that happens to other people and start treating it as the default condition of human cognition — including your own. The question is never "am I biased?" but "which biases are most likely operating here, and how might they be affecting my thinking?" This reframing makes it possible to take protective measures rather than concluding that protection is unnecessary.

Use structured processes rather than relying on introspection

Because introspection is an unreliable detector of bias, the most effective protection is to use structured decision-making processes that reduce the scope for bias to operate, rather than trying to detect and correct for it after the fact. Pre-commitment to criteria before evaluating options, blind review of applications and assessments, and explicit consideration of alternative explanations all reduce reliance on the biased introspective sense that your judgment is objective.

Seek external feedback actively

Since behaviour and outcomes are more reliable indicators of bias than introspection, external feedback from people who can observe your decisions and their results is more valuable than internal reflection for detecting your own biases. This requires both seeking that feedback and being genuinely open to it — which the bias blind spot itself makes difficult, because feedback that points to your own bias can feel like evidence of the other person's bias against you.

Study your own track record

A decision journal that records predictions, reasoning, and outcomes over time provides exactly the kind of behavioural evidence that is more effective than introspection for detecting bias. Patterns in your past decisions — systematic overconfidence, recurring blind spots, consistent errors in particular domains — are visible in the record of outcomes even when they are invisible to introspection. This is the same practice recommended for hindsight bias and choice-supportive bias, and for the same reason: it replaces the distorted retrospective account with an objective record.
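A decision journal needs no software, but the Python sketch below illustrates the kind of check it makes possible: comparing the confidence you recorded at decision time with how often you actually turned out to be right (a simple calibration check). The names `JournalEntry`, `calibration_report`, and `overconfidence_gap` are hypothetical, chosen for illustration, and the sample entries are invented rather than real data. If, across many entries, your hit rate sits well below your stated confidence, that gap is behavioural evidence of overconfidence that introspection alone would not reveal.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class JournalEntry:
    """One record in a hypothetical decision journal."""
    prediction: str    # what you expected to happen, written before the outcome
    confidence: float  # stated probability (0.0 to 1.0) recorded at decision time
    came_true: bool    # the outcome, filled in once it is known


def calibration_report(entries):
    """Compare stated confidence with the observed hit rate.

    Entries are grouped by confidence rounded to the nearest 0.1.
    Returns a sorted list of (stated_confidence, hit_rate, count).
    """
    groups = defaultdict(list)
    for entry in entries:
        if not 0.0 <= entry.confidence <= 1.0:
            raise ValueError(f"confidence must be in [0, 1], got {entry.confidence}")
        groups[round(entry.confidence, 1)].append(entry.came_true)

    # sum() over booleans counts the predictions that came true
    return [
        (level, sum(hits) / len(hits), len(hits))
        for level, hits in sorted(groups.items())
    ]


def overconfidence_gap(entries):
    """Mean stated confidence minus overall hit rate; positive means overconfident."""
    if not entries:
        raise ValueError("journal is empty")
    mean_confidence = sum(e.confidence for e in entries) / len(entries)
    hit_rate = sum(e.came_true for e in entries) / len(entries)
    return mean_confidence - hit_rate


# Invented sample entries, for illustration only.
journal = [
    JournalEntry("Project ships by March", 0.9, False),
    JournalEntry("Candidate A outperforms candidate B", 0.9, True),
    JournalEntry("Client renews contract", 0.7, True),
    JournalEntry("Q3 sales exceed target", 0.7, False),
]

for level, hit_rate, count in calibration_report(journal):
    print(f"said {level:.0%}, right {hit_rate:.0%} of {count} times")
print(f"overconfidence gap: {overconfidence_gap(journal):+.2f}")
```

Grouping by rounded confidence keeps the sketch simple. With only a handful of entries per group the hit rates are noisy, so a pattern becomes meaningful only once the journal has accumulated many decisions.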

The Deeper Point

The bias blind spot is in some ways the most important cognitive bias of all, because it is the bias that protects all the others. If you believe you are less susceptible to cognitive bias than the average person, as most people do (even though most people cannot be less biased than the typical person), then learning about cognitive biases becomes primarily an exercise in understanding other people's errors rather than your own. The knowledge is real but the application is absent.

Genuine engagement with cognitive bias requires accepting the uncomfortable conclusion that the biases you are most confident are not affecting you are precisely the ones you cannot detect through introspection. The feeling of objectivity is not evidence of objectivity. The absence of a felt sense of bias is not evidence of its absence. And the fact that you can clearly see bias in someone who disagrees with you is not evidence that you are seeing more clearly — it may simply be evidence that the bias blind spot is working exactly as described.

Related biases that interact closely with this one: confirmation bias, which the bias blind spot makes harder to detect in oneself; the Dunning-Kruger effect, where limited self-knowledge produces overconfidence in one's own competence; and the false consensus effect, which similarly leads people to overestimate the objectivity and representativeness of their own perspective.