Normalcy Bias — Meaning, Examples & How to Overcome It



What Is Normalcy Bias? Simple Definition

Normalcy bias — also called normality bias — is the tendency to underestimate both the likelihood and the potential impact of a disaster or disruptive event. When faced with warnings of an unusual or unprecedented threat, people assume that things will continue as they normally have, that the situation will resolve itself, and that the disruption will be less severe than it actually turns out to be.

In plain terms: because disasters have not happened before — or have not happened to you personally — your mind defaults to the assumption that they will not happen now. Warning signs are minimised, preparations are delayed, and evacuation decisions are deferred, all because the brain is anchored to a baseline of normality that the incoming evidence is failing to shift.


Normalcy Bias Meaning & Psychology

Normalcy bias was formally identified in disaster psychology as one of the key cognitive errors that delay or prevent appropriate responses to emergencies. Omer & Alon (1994) described it as a systematic underestimation of the probability and extent of expected disruption — the mind's tendency to treat the current normal state as the default prediction for the near future, even when evidence of an impending abnormal event is available.

Disaster research suggests that normalcy bias is the typical response rather than the exception. Figures of roughly 70–80% of people are widely cited, although estimates vary between studies and events. Rather than immediately taking protective action, most people enter a phase of information-seeking and verification: checking with neighbours, watching for others' reactions, and waiting for further confirmation before acting. This process, sometimes called "milling," consumes critical time. Studies of major disasters, including the World Trade Center evacuation on September 11, 2001, found that a large majority of survivors spoke with others before evacuating, even as the emergency was already unfolding.

Why the brain does this

Normalcy bias is driven by the brain's reliance on past experience as the primary template for predicting the future. For most of human history, most days have been normal. Threats have been rare. Warnings have frequently been false alarms. The brain has been shaped by this statistical reality to treat normalcy as the default prediction and to require strong, repeated evidence before revising it toward an abnormal scenario.

This makes normalcy bias closely related to the availability heuristic: people assess the probability of an event by how easily they can recall similar events. If a major hurricane, earthquake, or financial crash has never been directly experienced, it is cognitively unavailable, and therefore feels less probable, regardless of the objective statistical evidence. Popular accounts of the bias add that the brain needs several seconds to process new information even when calm (a figure of 8–10 seconds is often quoted) and longer under stress, creating a processing lag that compounds the bias.

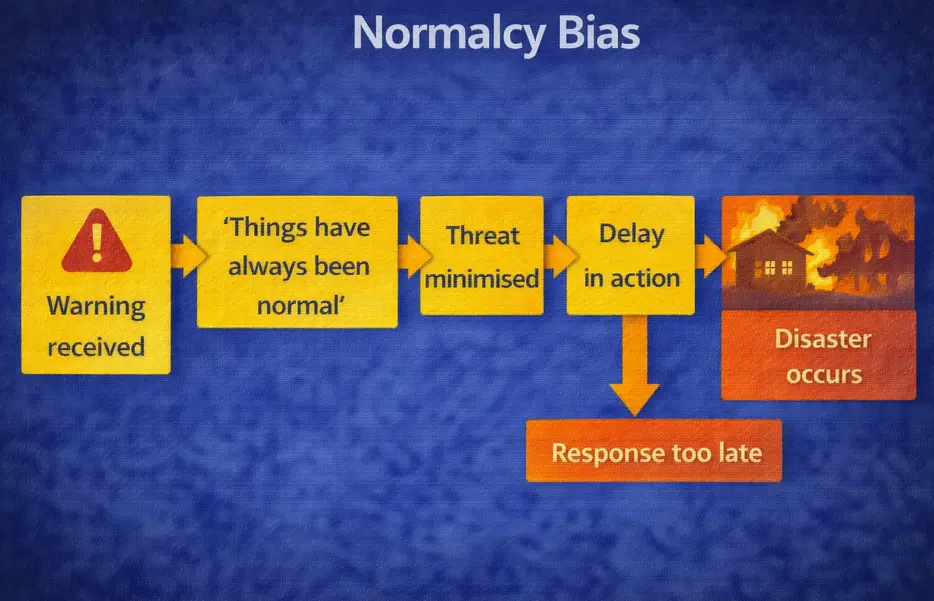

Normalcy bias: a warning is received but the assumption that "things have always been normal" minimises the threat, delay follows, the response comes too late, and disaster occurs.

Normalcy Bias in Real Life — Examples

The history of major disasters is filled with examples of normalcy bias delaying or preventing appropriate responses. When Pompeii was destroyed by the eruption of Mount Vesuvius in 79 AD, many inhabitants remained in their homes as ash began to fall: the volcano had not erupted in living memory, and the signs were interpreted as unusual but not as an existential threat. When the Titanic struck an iceberg in 1912, the ship's officers made insufficient preparations for evacuation, partly because the sinking of a ship of its class lay outside their operational model of what could happen. When Hurricane Katrina approached New Orleans in 2005, thousands of residents stayed despite a mandatory evacuation order; some lacked the means to leave, but many others stayed because they had weathered previous hurricanes without major harm.

In each case, normalcy bias was not a failure of intelligence; it was the predictable output of a cognitive system anchored to past experience. The events that were coming had no precedent in the direct experience of those affected, and so the default assumption of continued normality persisted against the incoming evidence.

Normalcy Bias in Business and Organisations

Normalcy bias is a significant cause of strategic failure in business. Companies that have operated successfully for extended periods develop deep institutional assumptions about the stability of their environment — their market, their competitive position, their regulatory context. When evidence emerges that these conditions are changing fundamentally, normalcy bias causes decision-makers to interpret the signals as temporary fluctuations rather than structural shifts, and to delay strategic adaptation accordingly.

The response of established industries to digital disruption provides numerous examples. Blockbuster, Kodak, and traditional media companies all received early and clear signals that their core markets were being structurally disrupted. The normalcy bias at the institutional level — the assumption that the core business model would continue to function as it always had — delayed responses until disruption was already severe. The past success of the business model made its eventual failure feel implausible right up until it occurred.

The Fukushima Daiichi nuclear disaster in 2011 is often cited as a case of normalcy bias at the institutional level. TEPCO had operated the plant for decades without a major disaster, under the oversight of Japanese regulators, and this extended period of normal operation reinforced the assumption that normal operation would continue. Safety upgrades recommended by international bodies were not implemented, partly because the need for them could not be viscerally felt against a backdrop of uninterrupted normality.

Normalcy Bias in Financial Markets

Financial markets exhibit normalcy bias in the period before major crashes. When asset prices have risen steadily for an extended period, market participants develop an increasingly strong expectation that prices will continue to rise — the recent normal becomes the predicted future. Warning signs that valuations are disconnected from fundamentals are systematically discounted as temporary or as already-priced-in, because the baseline of rising prices has become the cognitive default.

This connects closely to recency bias — the overweighting of recent experience in forming expectations — and to availability heuristic effects that make crashes feel less probable precisely because they have not been experienced recently. The longer a market has been calm, the more powerfully normalcy bias suppresses the felt probability of disruption — which is exactly the period when the underlying risk may be accumulating.

Normalcy Bias in Health and Personal Safety

On a personal level, normalcy bias shapes health and safety decisions in ways that can be consequential. People who have driven for years without an accident tend to feel invulnerable to road accidents, even as they take risks (distracted driving, speeding, driving tired) that objectively increase their chance of a crash. The extended period of normal driving without incident creates the felt sense that such outcomes do not apply to them. Past normal experience becomes a misleading guide to future risk.

The same mechanism operates in health screening decisions. People who have had no symptoms of serious illness for extended periods feel less need to be screened — the absence of past abnormality reinforces the assumption of continued normality. This is precisely the wrong inference from the data, since many serious conditions develop asymptomatically, but it is the inference that normalcy bias reliably produces.

How to Avoid and Overcome Normalcy Bias

Treat warnings as real until confirmed otherwise

The default mental posture that normalcy bias produces is to treat warnings as probably false until confirmed as real. Reversing this default — treating a credible warning as real until confirmed as false — changes the decision calculus in exactly the way that disaster preparedness requires. This does not mean panicking at every alert; it means taking preparatory action early enough that if the warning turns out to be accurate, the window for effective response has not been lost.

Pre-commit to trigger points

One of the most effective countermeasures to normalcy bias is to pre-commit to specific trigger points at which particular actions will be taken, without requiring a fresh decision at the time of the event. Deciding in advance that you will evacuate if an official evacuation order is issued — rather than waiting to see what the situation looks like and whether your neighbours are leaving — removes the in-the-moment decision from the influence of normalcy bias. The same principle applies in financial planning, organisational risk management, and personal health decisions.

Actively imagine the unprecedented

Normalcy bias is strongest for events with no personal precedent. Deliberately imagining in concrete detail how an unprecedented event would unfold (what you would need, what steps you would take, what the consequences of delay would be) makes the event more cognitively available and narrows the gap between its felt probability and its actual probability. This directly corrects for the availability heuristic: you cannot rely on recalled experience for novel events, so you must generate the mental representation through deliberate imagination.

Seek outside reference points

Because normalcy bias is anchored in personal experience, it can be effectively counteracted by seeking out the experience of others who have encountered the relevant event. Reading first-person accounts of disaster survivors, studying how similar organisations have responded to structural disruption, or consulting experts with direct experience of the type of event being considered all provide experiential data that your own history lacks. The same principle underlies debiasing strategies for confirmation bias: actively seeking evidence from outside your own experiential frame.

The Deeper Point

Normalcy bias is in many ways the most dangerous cognitive bias in this series, because it operates most powerfully in exactly the situations where accurate risk assessment matters most. In low-stakes, routine situations, it barely matters that the brain defaults to assuming tomorrow will be like today. In high-stakes, novel situations — where genuine disasters, major disruptions, or unprecedented threats are possible — that same default produces delayed responses, inadequate preparations, and missed windows for protective action.

The bias is also self-reinforcing in a particularly insidious way: every time a warning proves to be a false alarm, normalcy bias is strengthened. The base rate of false alarms is high enough that treating every warning seriously feels like overreaction — and the social pressure not to appear alarmist compounds this. Yet the base rate of genuine disasters, though lower, is not zero, and when they arrive, normalcy bias ensures that many people are still processing whether to take the situation seriously.

Related biases that interact closely with this one: the availability heuristic, which makes personally unexperienced events feel less probable; recency bias, which overweights the recent normal as a prediction of the near future; and confirmation bias, which selectively attends to information consistent with the normalcy assumption while discounting warning signals.

The Cognitive Bias Spotter Test below puts that understanding to work — see if you can identify normalcy bias and the other nine biases when they appear in realistic scenarios.